Automated Web Patrol with Strider HoneyMonkeys:

Finding Web Sites That Exploit Browser Vulnerabilities

Yi-Min Wang, Doug Beck, Xuxian Jiang, and Roussi Roussev

Cybersecurity and Systems Management Research Group

Microsoft Research, Redmond, Washington

Abstract

Internet attacks that use Web servers to exploit browser vulnerabilities to install malware programs are on

the rise [D04,R04,B04,S05]. Several recent reports suggested that some companies may actually be building a

business model around such attacks [IF05,R05]. Expensive, manual analyses for individually discovered

malicious Web sites have recently emerged [F04,G05]. In this paper, we introduce the concept of Automated

Web Patrol, which aims at significantly reducing the cost for monitoring malicious Web sites to protect

Internet users. We describe the design and implementation of the Strider HoneyMonkey Exploit Detection

System [L05,N05], which consists of a network of monkey programs running on virtual machines with

different patch levels and constantly patrolling the Web to hunt for Web sites that exploit browser

vulnerabilities.

Within the first month of utilizing this new system, we identified 752 unique URLs that are operated by

287 Web sites and that can successfully exploit unpatched WinXP machines. The system automatically

constructs topology graphs that capture the connections between the exploit sites based on traffic redirection,

which leads to the identification of several major players who are responsible for a large number of exploit

pages.

1. Introduction

Internet attacks that use a malicious, hacked, or infected Web server to exploit unpatched client-side

vulnerabilities of visiting browsers are on the rise. Many attacks in the past 12 months fell into this category,

including Download.Ject [D04], Bofra [R04], and Xpire.info [B04]. Such attacks allow the attackers to install

malware programs without requiring any user interaction. Manual analyses of exploit sites have recently

emerged [F04,G05,IF05,R05,S05,T05]. Although they often provide very useful and detailed information

about which vulnerabilities are exploited and which malware programs are installed, such analysis efforts are

not scalable and do not provide a comprehensive picture of the problem.

In this paper, we introduce the concept of Automated Web Patrol that involves a network of automated

agents actively patrolling the Web to find malicious Web sites. We describe the design and implementation of

the Strider HoneyMonkey Exploit Detection System that uses active client honeypots [H,HC] to perform

automated Web patrol with the specific goal of finding Web sites that exploit browser vulnerabilities. The

system consists of a pipeline of monkey programs running on Virtual Machines (VMs) with different patch

levels in order to detect exploit sites with different capabilities.

Within the first month, we identified 752 unique URL links, operated by 287 Web sites, that can

successfully exploit unpatched WinXP machines. We present a preliminary analysis of the data and suggest

what can be done based on the data to improve Internet safety.

2. Automated Web Patrol with the Strider HoneyMonkey Exploit Detection System

Although the general idea of crawling the Web to look for pages of particular interest is fairly

straightforward, we have found that a combination of the following key ideas is most crucial for increasing

the “hit rate” and making the concept of Web patrol useful in practice:

1. Where Do We Start? There are 10 billion Web pages out there and most of them do not exploit

browser vulnerabilities. So, it is very important to start with a list of URLs that are most likely to

generate hits. The approach we took was to collect an initial list of 5000+ potentially malicious URLs

by doing a Web search for Windows “hosts” files [HF] that are used to block advertisements and bad

sites, and lists of known-bad Web sites that host some of the most malicious spyware programs

[CWS05].

2. How Do We Detect An Exploit? One method of detecting a browser exploit is to study all known

vulnerabilities and build signature-based detection code for each. Since this approach is fairly

expensive, we decided to lower the cost of Web patrol by utilizing a black-box approach: we run a

monkey program¹ with the Strider Flight Data Recorder (FDR) [W03] to efficiently record every single

file and Registry read/write. The monkey launches a browser instance for each suspect URL and waits

for a few minutes. The monkey is not set up to click on any dialog box to permit installation of any

software; consequently, any executable files that get created outside the browser’s temporary folder are

detected by the FDR and signal an exploit. Such a black-box approach has an important advantage: it

allows the detection of known-vulnerability exploits and zero-day exploits in a uniform way, through

monkeys with different patch levels. Each monkey also runs with the Strider Gatekeeper [W04] to

detect any hooking of Auto-Start Extensibility Points (ASEPs) that may not involve creation of

executables, and with Strider GhostBuster [W05] to detect stealth malware that hides processes and

ASEP hooks.

____________

¹ An automation-enabled program such as the Internet Explorer browser allows programmatic access to most of the

operations that can be invoked by a user. A “monkey program” is a program that drives the browser in a way that mimics

a human user’s operation.

To ease cleanup of infected state, we run the monkey inside a VM. Upon detecting an exploit, the

monkey persists its data and notifies the Monkey Controller, running on the host machine, to destroy

the infected VM and restart a clean VM. The restarted VM automatically launches the monkey, which

then continues to visit the remaining URL list. The Monkey Controller also passes the detected exploit

URL to the next monkey in the pipeline to continue investigating the strength of the exploit. When the

end-of-the-pipeline monkey, running on a fully patched VM, reports a URL as an exploit, the URL is

upgraded to a zero-day exploit and the malware programs that it installed are immediately investigated

and passed on to the Microsoft Security Response Center.

3. How Do We Expand And Guide The Search? The initial list may contain only a small number of

hits, so it is important to have an effective guided search in order to grow the patrolled area to increase

coverage. We have found that, usually, the links displayed on an exploit page have a higher probability

of being exploit pages as well because people in the exploit business like to refer to each other to

increase traffic. We take advantage of this by doing Web crawling through those links to generate

bigger “bad neighborhoods”. More importantly, we have observed that many of the exploit pages

automatically redirect visiting browsers to a number of other pages, each of which may try a different

exploit or install different malware programs. We take advantage of this by tracking such redirections

to enable automatic derivation of their business relationship: content providers are responsible for

attracting browser traffic and selling/redirecting the traffic to exploit providers, which specialize in and

are responsible for actually performing the exploits to install malware.
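
The detection rule and pipeline escalation described in item 2 can be sketched as follows. This is a minimal illustration under stated assumptions, not the HoneyMonkey implementation: the `FileWrite` record stands in for an FDR trace entry, the executable suffixes and the browser temporary folder path are hypothetical values, and the pipeline order is assumed, with stage labels borrowed from Section 3.1. The `visit` callback is a placeholder for launching a browser inside a VM at a given patch level. The Gatekeeper and GhostBuster checks are not modeled here.

```python
from dataclasses import dataclass
from pathlib import PureWindowsPath
from typing import Callable, Iterable, Optional, Sequence

# Assumptions for illustration only: the paper does not specify these values.
EXECUTABLE_SUFFIXES = {".exe", ".dll", ".sys", ".scr"}
BROWSER_TEMP_DIR = PureWindowsPath(
    r"C:\Documents and Settings\monkey\Local Settings\Temporary Internet Files"
)
# Hypothetical pipeline order, least patched first; labels follow Section 3.1.
PIPELINE = ["SP1-UP", "SP2-UP", "SP2-PP", "SP2-FP"]


@dataclass(frozen=True)
class FileWrite:
    """One file-write record; a stand-in for an FDR trace entry."""
    path: str


def is_exploit(writes: Iterable[FileWrite]) -> bool:
    """Return True if any executable was written outside the browser temp folder."""
    for write in writes:
        path = PureWindowsPath(write.path)
        is_executable = path.suffix.lower() in EXECUTABLE_SUFFIXES
        in_browser_temp = BROWSER_TEMP_DIR in path.parents
        if is_executable and not in_browser_temp:
            return True
    return False


def strongest_exploited_level(
    url: str,
    pipeline: Sequence[str],
    visit: Callable[[str, str], Iterable[FileWrite]],
) -> Optional[str]:
    """Escalate a URL through the pipeline until a patch level resists it.

    Returns the most-patched level that was still exploited, or None if the
    first (least patched) level was not exploited.
    """
    strongest = None
    for level in pipeline:
        if not is_exploit(visit(url, level)):
            break
        strongest = level
    return strongest
```

Under these assumptions, a URL for which `strongest_exploited_level` returns the last pipeline entry corresponds to the zero-day case in the text, since the fully patched VM was exploited. The rule needs no knowledge of which vulnerability was used, which is what lets the same check serve every patch level.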

3. Exposing and Analyzing The Exploit Sites

3.1. The Importance of Software Patching

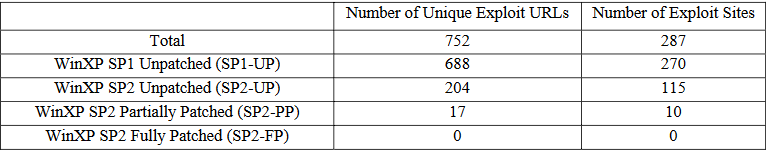

Figure 1 shows the breakdown of the 752 Internet Explorer browser-based exploit URLs among different

service-pack (SP1 or SP2) and patch levels, where “UP” stands for “UnPatched”, “PP” stands for “Partially

Patched”, and “FP” stands for “Fully Patched”. As expected, the SP1-UP number is much higher than the

SP2-UP number because the former has more vulnerabilities and they have existed for a longer time.

The SP2-PP numbers are the numbers of exploit pages and sites that successfully exploited a WinXP SP2

machine partially patched up to early 2005. The fact that the number is one order of magnitude lower than the

SP2-UP number demonstrates the importance of keeping software up to date. But since the number is still not

negligible, enterprise proxies may want to blacklist these sites while the latest updates are still being

tested for deployment and ISPs may want to block access to these sites to give their consumer customers more

time to catch up with the updates.
